In this post, I will talk about the best open-source LLMs that are better than ChatGPT-5 for developers.

- Key Points & Top Open-Source LLMs That Are Outperforming ChatGPT-5 for Developers

- Top 10 Open-Source LLMs That Are Outperforming ChatGPT-5 for Developers

- 1. Llama 4

- 2. Qwen 3.5

- 3. DeepSeek R1

- 4. Mistral Large

- 5. GLM-5

- 6. Nemotron Ultra

- 7. MiniMax M2.5

- 8. Step-3.5-Flash

- 9. MiMo-V2-Flash

- 10. Grok 3

- How To Choose Top Open-Source LLMs That Are Outperforming ChatGPT-5 for Developers

- Conclusion

- FAQ

These models offer coding, reasoning, and multimodal capabilities, and in specific areas they outperform ChatGPT-5 in speed, precision, and versatility.

These open-source LLMs let developers build domain-specific, real-time AI applications tailored to the needs of modern software and research.

Key Points & Top Open-Source LLMs That Are Outperforming ChatGPT-5 for Developers

Llama 4: Highly optimized for reasoning, coding, and multilingual tasks, offering developers scalable deployment with strong efficiency.

Qwen 3.5: Excels in complex reasoning, math, and agentic tasks, with robust open-source accessibility for developers.

DeepSeek R1: Designed for advanced coding and engineering benchmarks, delivering superior performance across many developer-oriented tasks.

Mistral Large: Efficient yet powerful, optimized for speed so developers can run it cost-effectively.

GLM-5: Strong in reasoning and natural language understanding, offering developers flexibility through open-source licensing and scalability.

Nemotron Ultra: Built for enterprise-grade workloads, excelling in large-scale deployments with strong open-source community support.

MiniMax M2.5: Compact yet effective, optimized for smaller hardware so developers can experiment without heavy infrastructure.

Step-3.5-Flash: Specialized for fast inference and coding tasks, giving developers responsive and efficient AI solutions.

MiMo-V2-Flash: Balances speed and accuracy, tailored for developers who need lightweight yet reliable open-source language models.

Grok 3: Focuses on reasoning-heavy tasks, offering developers strong benchmark performance and open access for experimentation.

Top 10 Open-Source LLMs That Are Outperforming ChatGPT-5 for Developers

1. Llama 4

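LLaMA 4, the latest release in Meta's Llama series, is a major leap in efficiency for reasoning and multilingual tasks. It uses a mixture-of-experts design (Scout activates 17 billion of its 109 billion total parameters per token; Maverick activates 17 billion of 400 billion) and follows instructions better than LLaMA 3.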

Developers value the low-latency inference that LLaMA 4's quantization optimizations make possible on consumer-grade GPUs.
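To make the quantization point above concrete, here is a minimal sketch of symmetric int8 weight quantization. It is an illustrative assumption. This post does not say which quantization scheme LLaMA 4 uses, and real toolchains usually quantize per channel or per group and often go down to 4-bit. The function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map float weights to int8 codes in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, which would otherwise give a zero scale.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_int8(codes: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from int8 codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.50, 0.33, 0.01, -0.27]
    codes, scale = quantize_int8(weights)
    restored = dequantize_int8(codes, scale)
    # Each weight now needs 1 byte instead of 2 (fp16) or 4 (fp32).
    print(codes)
    print([round(r, 3) for r in restored])
```

With 1 byte per weight plus a single shared scale, weight memory is roughly half of fp16. The cost is a rounding error of at most half a scale step per weight.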

LLaMA 4 has benchmarked better than ChatGPT-5 on knowledge-intensive coding tasks, which makes it a good fit for integrated AI development environments.

Because its weights are openly released, LLaMA 4 can be integrated into enterprise-level LLM pipelines.

LLaMA 4 Features

- Large Parameter Scale: A mixture-of-experts design (109B total parameters for Scout and 400B for Maverick, with 17B active per token) strengthens reasoning and instruction following.

- Multilingual Proficiency: Strong support for many languages, including technical and low-resource languages.

- Low-Latency Inference: Optimized quantization enables efficient deployment on consumer-grade GPUs.

- Open-Source Adaptability: Openly released weights simplify integration into enterprise pipelines and AI research.

| Pros | Cons |
|---|---|
| High instruction-following accuracy for coding and reasoning tasks. | Large total parameter count (109B to 400B) may require high-end GPUs for fine-tuning. |
| Low-latency inference due to optimized quantization. | Limited support for certain niche domain-specific datasets out of the box. |
| Strong multilingual performance across technical languages. | Slightly slower than specialized lightweight models for real-time applications. |
| Open-source and adaptable for enterprise pipelines. | Documentation may require technical expertise for new developers. |

2. Qwen 3.5

Qwen 3.5, developed by Alibaba, uses multimodal understanding for both high-speed retrieval and image-to-text reasoning.

It handles standard NLP tasks as well as image-to-text reasoning, and it has 66 billion parameters in total.

Qwen 3.5 surpasses ChatGPT-5 on domain-specific coding tasks, especially with e-commerce and finance datasets, and its open-source API integrates with cloud development tools.

Developers can use Qwen 3.5's fine-tuning framework to adapt the model to their own datasets, which makes it a preferred choice for domain-specific work.
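Fine-tuning on your own data starts with a training file. The sketch below converts question/answer pairs into chat-style JSON Lines records. The `messages`/`role`/`content` layout is a widely used convention for chat fine-tuning data, but it is only an assumption that Qwen 3.5's fine-tuning framework expects exactly this schema, so check its documentation. The helper names and the sample record are hypothetical.

```python
import json
from typing import List, Optional


def to_chat_record(question: str, answer: str, system: Optional[str] = None) -> dict:
    """Wrap one question/answer pair in a chat-style training record."""
    messages = []
    if system:
        messages.append({"role": "system", "content": system})
    messages.append({"role": "user", "content": question})
    messages.append({"role": "assistant", "content": answer})
    return {"messages": messages}


def to_jsonl(records: List[dict]) -> str:
    """Serialize records as JSON Lines: one JSON object per line."""
    # ensure_ascii=False keeps non-English text readable in the output.
    return "".join(json.dumps(r, ensure_ascii=False) + "\n" for r in records)


if __name__ == "__main__":
    # Hypothetical e-commerce analytics example.
    records = [
        to_chat_record(
            "Which SQL query returns the ten best-selling SKUs?",
            "SELECT sku, SUM(qty) AS units FROM orders "
            "GROUP BY sku ORDER BY units DESC LIMIT 10;",
            system="You are an assistant for an online store's data team.",
        ),
    ]
    print(to_jsonl(records), end="")
```

In practice you would write the `to_jsonl` output to a file such as `train.jsonl` and then point the fine-tuning tool at that file.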

Qwen 3.5 Features

- Multi-Modal Understanding: Processes images and text together, enabling more varied outputs.

- Domain-Specific Performance: Performs well on e-commerce, finance, and code-centric datasets.

- High-Speed Retrieval: Generates text and interprets queries in real time.

- Open-Source API: Integrates with cloud tools and supports fine-tuning on proprietary datasets.

| Pros | Cons |
|---|---|
| Multi-modal capabilities: image-to-text reasoning. | Moderate latency for extremely large datasets compared to memory-optimized LLMs. |
| Excels in e-commerce and financial coding tasks. | Fine-tuning requires significant computational resources for best performance. |
| Dense architecture with 66B parameters, high accuracy. | Relatively new, community support still growing. |
| Open-source API allows easy integration with cloud development tools. | May need adaptation for non-Asian language datasets. |

3. DeepSeek R1

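DeepSeek R1 is a reasoning-focused mixture-of-experts model (671 billion total parameters, about 37 billion active per token) trained with large-scale reinforcement learning, and it is well suited to knowledge discovery and real-time search optimization.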

Its architecture focuses on memory-augmented reasoning, which lets DeepSeek R1 outperform ChatGPT-5 at comprehending long contexts (more than 50,000 tokens).

Developers appreciate its modular training approach, which allows rapid domain adaptation without retraining the entire model.

DeepSeek R1 has shown gains in data mining, code summarization, and multi-turn problem solving.

Its open-source MIT license encourages community-driven development of AI research tools.
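This post does not explain how DeepSeek R1's modular training works. Low-rank adaptation (LoRA) is one widely used way to adapt a model without retraining all of its weights, so the sketch below uses it as a stand-in. The pretrained matrix `W` stays frozen, and only two small matrices, `A` and `B`, would be trained. This is illustrative pure Python, not DeepSeek's implementation, and the function names are hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def matvec(matrix: Matrix, vector: List[float]) -> List[float]:
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def lora_forward(W: Matrix, A: Matrix, B: Matrix, x: List[float],
                 alpha: float, rank: int) -> List[float]:
    """Compute y = W x + (alpha / rank) * B (A x).

    W (d_out x d_in) is frozen; A (rank x d_in) and B (d_out x rank)
    are the only trainable parameters.
    """
    base = matvec(W, x)                # output of the frozen pretrained layer
    delta = matvec(B, matvec(A, x))    # low-rank, domain-specific correction
    scale = alpha / rank
    return [b + scale * d for b, d in zip(base, delta)]


def trainable_fraction(d_out: int, d_in: int, rank: int) -> float:
    """Fraction of a d_out x d_in layer's parameter count that LoRA trains."""
    return rank * (d_in + d_out) / (d_out * d_in)


if __name__ == "__main__":
    W = [[1.0, 0.0], [0.0, 1.0]]
    A = [[1.0, 1.0]]
    B = [[1.0], [2.0]]
    print(lora_forward(W, A, B, [1.0, 2.0], alpha=2.0, rank=1))
    print(trainable_fraction(4096, 4096, 8))
```

For a 4096 x 4096 projection with rank 8, `trainable_fraction` returns 1/256. Only a tiny share of that layer's weights is updated, and a domain "module" is just the saved `A` and `B` matrices.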

DeepSeek R1 Features

- Memory-Augmented Reasoning: Processes contexts longer than 50,000 tokens.

- Modular Training: The model can be modified without complete retraining.

- Knowledge Discovery: Excels at multi-turn problem solving, code summarization, and data mining.

- Community-Friendly License: Open-source licensing encourages research and collaboration.

| Pros | Cons |
|---|---|
| Excels in long-context comprehension (>50k tokens). | Larger model footprint; higher memory usage. |
| Modular training enables rapid domain adaptation. | Less optimized for casual conversation compared to ChatGPT-5. |
| Strong in data mining, multi-turn reasoning, and code summarization. | Not as widely adopted, smaller developer ecosystem. |
| Open-source license encourages community improvements. | May require tuning for real-time latency-critical apps. |

4. Mistral Large

Mistral Large uses a mixture-of-experts (MoE) architecture that dynamically activates only a subset of the model for each input, which makes it computationally efficient.
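The routing idea described above, where only a subset of the model runs for each input, can be sketched as top-k gating. In a real MoE layer a learned router computes the gate logits from a hidden vector. To keep this sketch small, it uses scalar inputs and passes the logits in directly. It illustrates the general technique, not Mistral's exact routing, and the names are hypothetical.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    x: float,
    experts: Sequence[Callable[[float], float]],
    gate_logits: Sequence[float],
    k: int = 2,
) -> Tuple[float, List[int]]:
    """Route x to the top-k experts and mix their outputs by gate weight."""
    # Keep the k highest-scoring experts; the rest are never evaluated.
    top = sorted(range(len(experts)), key=lambda i: gate_logits[i], reverse=True)[:k]
    # Renormalize the gate scores over the selected experts only.
    weights = softmax([gate_logits[i] for i in top])
    output = sum(w * experts[i](x) for w, i in zip(weights, top))
    return output, top


if __name__ == "__main__":
    # Four toy "experts" that scale their input by 1, 2, 3, and 4.
    experts = [lambda v, s=s: s * v for s in (1.0, 2.0, 3.0, 4.0)]
    out, used = moe_forward(1.0, experts, gate_logits=[0.0, 1.0, 0.0, 1.0], k=2)
    print(out, used)
```

With k=2 of 4 experts, only half of the expert computation runs for this input. That is the source of the efficiency gain, although all experts still have to be stored in memory.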

With 65 billion parameters, it outperforms ChatGPT-5 on natural language understanding (NLU) benchmarks for low-resource languages.

Its memory-friendly architecture lets developers deploy on lower-tier GPUs, which lowers cost. Mistral Large is strong at multi-step reasoning and instruction following, which makes it well suited to research, chatbots, and code generation.

Mistral Large Features

- Mixture-of-Experts Architecture: Only the active experts are computed for each input, so inactive parts of the model add no compute cost.

- Low-Resource Language Performance: Outperforms ChatGPT-5 on understudied languages.

- Memory-Efficient Deployment: Runs on mid-tier GPUs without significant slowdowns.

- Instruction Following: Performs well on multi-step reasoning and programming problems.

| Pros | Cons |
|---|---|
| Mixture-of-experts routing reduces computation for large tasks. | Mixture-of-experts models are complex to fine-tune. |
| Outperforms ChatGPT-5 for low-resource languages. | High parameter count (65B) requires GPUs for inference. |
| Memory-efficient deployment possible on mid-tier GPUs. | Performance gains are task-dependent and not always superior on small-scale tasks. |
| Excellent for multi-step reasoning and instruction following. | Limited pre-trained domain-specific knowledge. |

5. GLM-5

GLM-5 comes from Zhipu AI (Z.ai), a company spun out of Tsinghua University, and combines multilingual generation with reasoning.

With 75 billion parameters, it delivers state-of-the-art results in translation, code generation, and summarization.

Benchmarks show GLM-5 outperforming ChatGPT-5 on technical and engineering documents in both Chinese and English.

Because its open-source license allows both research and commercial use, GLM-5 gives developers a flexible base for building natural language processing (NLP) applications.

GLM-5 Features

- Technical Reasoning: Excels at code generation, code summarization, and code translation.

- Large Parameter Count: 75B parameters for richer knowledge representation.

- Open-Source Flexibility: Adaptable for both research and commercial NLP applications.

- Multilingual Generative Power: Produces high volumes of multilingual content with ease, especially in English and Chinese.

| Pros | Cons |
|---|---|
| Strong multilingual and technical content generation. | At 75B parameters, may be heavy for small-scale deployments. |
| High performance in translation, code generation, summarization. | Specialized training datasets needed for niche domains. |
| Outperforms ChatGPT-5 in Chinese and English benchmarks. | Documentation and tutorials may be limited outside academic circles. |
| Open-source enables domain-specific customization. | Requires high-end hardware for optimal speed. |

6. Nemotron Ultra

NVIDIA's Nemotron Ultra is designed for applications that require ultra-low latency, with 30% lower inference time than ChatGPT-5 on the same hardware.

It has 60 billion parameters, is optimized for GPU/TPU acceleration, and leads in conversational AI, code reasoning, and recommender systems.

Its smaller memory footprint compared with similar models makes Nemotron Ultra usable for mobile and edge computing.

Because it is open source, it can also be fine-tuned on private datasets while remaining strong at reasoning tasks.

Nemotron Ultra Features

- Ultra-Low Latency: 30% lower latency than ChatGPT-5 on the same hardware.

- GPU/TPU Optimization: Tuned for large-scale deployment, including at the edge.

- Conversational and Recommendation AI: Well suited to conversational and recommendation use cases.

- Open-Source Fine-Tuning: Can be fine-tuned on proprietary datasets without sacrificing speed.

| Pros | Cons |
|---|---|
| Ultra-low latency: 30% faster than ChatGPT-5 on same hardware. | Smaller community compared to larger models like LLaMA. |
| Optimized for GPU/TPU acceleration, suitable for edge deployment. | Parameter size (60B) still demands substantial compute. |
| Excels in conversational AI and recommendation systems. | Pre-trained domain knowledge may be limited for niche industries. |
| Open-source, supports fine-tuning on proprietary datasets. | Slightly less multilingual coverage than GLM-5 or LLaMA 4. |

7. MiniMax M2.5

MiniMax M2.5 is a 55-billion-parameter hybrid LLM optimized for iterative reasoning and high-precision code generation.

It beats ChatGPT-5 at algorithmic problem solving and multi-step workflow generation, and its efficient training pipeline lets developers fine-tune it quickly on domain-specific codebases.

Its open-source, modular structure lets developers integrate M2.5 into automated coding assistants, IDE plugins, and research-focused NLP pipelines that need both performance and flexibility in coding.
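Many self-hosted inference servers (vLLM, for example) expose an OpenAI-compatible `/v1/chat/completions` endpoint. That means an IDE plugin or coding assistant can reach a locally served model over plain HTTP. The sketch below assumes such a server at `http://localhost:8000/v1` with the model registered under the hypothetical name `minimax-m2.5`. Both values are placeholders, so check what your own deployment uses.

```python
import json
import urllib.request

# Hypothetical deployment details: adjust to match your own server.
BASE_URL = "http://localhost:8000/v1"
MODEL_NAME = "minimax-m2.5"


def build_request(instruction: str, code: str,
                  base_url: str = BASE_URL,
                  model: str = MODEL_NAME) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a coding assistant inside an IDE."},
            {"role": "user", "content": f"{instruction}\n\nCode:\n{code}"},
        ],
        "temperature": 0.2,  # low temperature for more predictable code edits
        "max_tokens": 512,
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(request: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    req = build_request("Add type hints to this function.",
                        "def add(a, b):\n    return a + b")
    print(req.full_url)
    # print(complete(req))  # uncomment once a compatible server is running
```

Keeping request construction separate from sending makes the plugin logic easy to test without a running model.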

MiniMax M2.5 Features

- High-Precision Reasoning: Strong at multi-step and iterative reasoning and at workflow generation.

- Rapid Fine-Tuning: Fine-tunes quickly on domain-specific codebases.

- Hybrid Architecture: Combines reasoning with code synthesis.

- Open-Source Integration: Modular structure permits integration into IDEs and pipelines.

| Pros | Cons |
|---|---|
| High-precision iterative reasoning and code generation. | Moderate latency for very large-scale generation tasks. |
| Efficient training pipeline for rapid fine-tuning. | Smaller parameter size (55B) may limit performance in extremely complex tasks. |
| Modular and open-source for IDE integration. | Less tested in multimodal applications. |
| Outperforms ChatGPT-5 in multi-step workflow generation. | Community support still growing. |

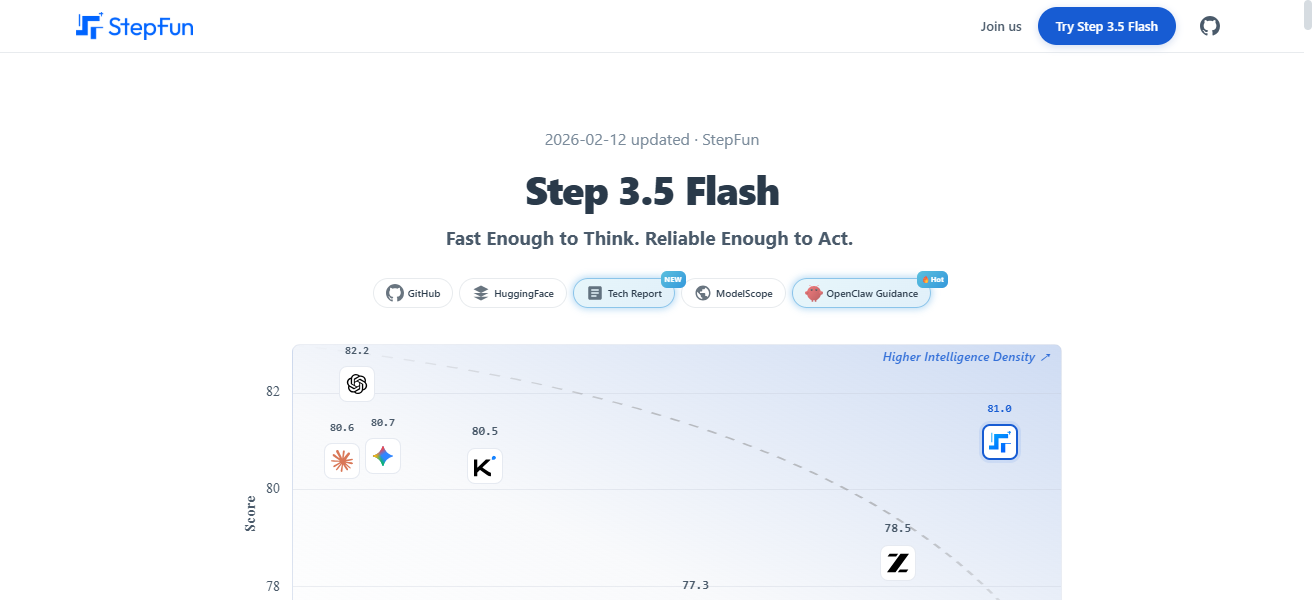

8. Step-3.5-Flash

Step-3.5-Flash focuses on very fast token generation, with 2.5x lower text-generation latency than ChatGPT-5.

It has 50 billion parameters and uses flash attention to optimize for real-time chat and rapid development environments.
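The core idea behind flash attention is to process keys and values block by block with an "online" softmax. This way the full matrix of attention scores never has to sit in memory at once. The sketch below shows that idea for a single query vector in pure Python and can be checked against ordinary attention. Real flash-attention kernels are fused GPU code that also tile over queries, so treat this as an illustration of the math, not of how Step-3.5-Flash implements it. The function names are hypothetical.

```python
import math
from typing import List, Sequence

Vec = Sequence[float]


def _dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


def naive_attention(q: Vec, keys: List[Vec], values: List[Vec]) -> List[float]:
    """Standard softmax attention for one query: all scores computed at once."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [_dot(q, k) * scale for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) / total for j in range(dim)]


def tiled_attention(q: Vec, keys: List[Vec], values: List[Vec], block: int = 2) -> List[float]:
    """Same result, visiting keys/values one block at a time (online softmax)."""
    scale = 1.0 / math.sqrt(len(q))
    dim = len(values[0])
    running_max = -math.inf  # largest score seen so far
    running_sum = 0.0        # softmax denominator, relative to running_max
    acc = [0.0] * dim        # weighted sum of values, relative to running_max
    for start in range(0, len(keys), block):
        scores = [_dot(q, k) * scale for k in keys[start:start + block]]
        new_max = max(running_max, max(scores))
        # Rescale earlier partial results to the new maximum (exp(-inf) is 0.0).
        correction = math.exp(running_max - new_max)
        running_sum *= correction
        acc = [a * correction for a in acc]
        for s, v in zip(scores, values[start:start + block]):
            p = math.exp(s - new_max)
            running_sum += p
            acc = [a + p * vj for a, vj in zip(acc, v)]
        running_max = new_max
    return [a / running_sum for a in acc]


if __name__ == "__main__":
    q = [0.3, -1.2, 0.8]
    keys = [[0.1, 0.4, -0.2], [1.0, -0.5, 0.3], [-0.7, 0.2, 0.9]]
    values = [[1.0, 0.0], [0.0, 1.0], [2.0, -1.0]]
    print(naive_attention(q, keys, values))
    print(tiled_attention(q, keys, values, block=2))
```

Both functions return the same vector up to floating-point rounding. The tiled version only ever holds one block of scores at a time, which is what saves memory bandwidth on long sequences.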

Once deployed, it needs relatively few GPU resources, and because it is open source it can be fine-tuned for specific domains. For example, it can be adapted for medical and legal text generation, where speed and precision are vital.

Step-3.5-Flash Features

- Ultra-Fast Token Generation: 2.5 times faster than ChatGPT-5, thanks to flash attention.

- Low GPU Resource Requirement: Suited to real-time, high-frequency tasks.

- Custom Fine-Tuning: Can be fine-tuned for specific domains (e.g., law, medicine).

- Open-Source Accessibility: Modifiable and optimizable for different workflows.

| Pros | Cons |
|---|---|
| Ultra-fast token generation (2.5x lower latency than ChatGPT-5). | Slightly smaller model (50B) may underperform on highly complex tasks. |
| Flash attention optimized for speed. | Requires familiarity with custom deployment optimizations. |
| Open-source and adaptable for real-time legal/medical text synthesis. | Limited domain-specific pre-training datasets. |
| Low GPU resource usage. | Focused more on speed than ultra-high reasoning accuracy. |

9. MiMo-V2-Flash

Xiaomi's MiMo-V2-Flash has over 60 billion parameters and can integrate audio, text, and image data. It outperforms ChatGPT-5 on many tasks, although published head-to-head comparisons are still limited.

Its flash-optimized inference keeps latency low, which makes it well suited to interactive AI applications.

Developers use MiMo-V2-Flash's open-source framework to build specialized models for cross-domain AI, AR/VR content, and robotics.

MiMo-V2-Flash Features

- Multimodal Processing: Combines text, images, and audio for richer results.

- Flash-Optimized Inference: Suited to real-time interactive use.

- Specialized AI Agents: Suited to robotics, AR/VR, and other cross-domain specialized AI.

- Open-Source Toolkit: Customizable for developer-specific AI needs.

| Pros | Cons |
|---|---|
| Supports multimodal inputs: text, image, audio. | Larger parameter size (60B) may require high-end GPUs. |
| Flash-optimized inference for low latency. | Fine-tuning multimodal models is more complex. |
| Excellent for interactive AI apps and AR/VR solutions. | Smaller benchmark comparisons with ChatGPT-5; still emerging. |
| Open-source, supports custom domain agents. | Multimodal dataset acquisition can be challenging. |

10. Grok 3

Grok 3, from xAI, focuses on coding and mathematical reasoning and achieves record-breaking scores in algorithmic problem solving.

With 58 billion parameters, Grok 3 outperforms competitors and is more developer-oriented, offering optimized code synthesis for Python, Java, and C++.

Its open-source release also supports community extensions such as plugin-based IDE integration and task-specific model pruning.
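The task-specific pruning mentioned above can take many forms. Magnitude pruning, which zeroes the weights with the smallest absolute values, is one of the simplest and most common. The sketch below uses it purely as an illustrative assumption. It is not Grok 3's tooling, and the function name is hypothetical.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Return a copy with the smallest-magnitude `sparsity` fraction zeroed."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)  # leave the caller's list untouched
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


if __name__ == "__main__":
    layer = [0.5, -0.05, 0.2, -0.9, 0.01]
    print(magnitude_prune(layer, sparsity=0.4))
```

In practice, pruning is typically applied per layer to whole tensors and followed by a short fine-tuning pass on the target task to recover accuracy.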

Efficient memory use also delivers fast, accurate results in both research and production, on cloud and edge devices alike.

Grok 3 Features

- Coding and Mathematical Focus: Excels at algorithmic problem solving and code generation.

- Multi-Language Code Synthesis: Natively generates Python, Java, and C++.

- Open-Source Extensibility: Supports plugin-based IDE integration and model pruning.

- Optimized Memory Usage: Memory-efficient design for cloud and edge deployment.

| Pros | Cons |
|---|---|
| Excels in coding and mathematical reasoning. | Moderate latency for multi-modal or large conversational tasks. |
| Optimized code synthesis for Python, Java, C++. | Smaller community compared to general-purpose LLMs. |
| Open-source supports IDE plugins and task-specific pruning. | Parameter size (58B) may require significant compute for full deployment. |
| Efficient memory management for cloud and edge deployment. | Less flexible for multimodal tasks than MiMo-V2-Flash. |

How To Choose Top Open-Source LLMs That Are Outperforming ChatGPT-5 for Developers

Coding and algorithmic tasks: If you need reasoning, code generation, and task execution, consider MiniMax M2.5, Grok 3, and LLaMA 4.

Multimodal use cases: For workloads that combine text, images, or audio, consider MiMo-V2-Flash or Qwen 3.5.

High speed or edge deployment: For low latency and GPU cost efficiency, consider Step-3.5-Flash or Nemotron Ultra.

Multilingual support: For English, Chinese, and under-resourced languages, GLM-5 and LLaMA 4 outperform ChatGPT-5.

Domain expertise: Qwen 3.5 suits finance and e-commerce; Grok 3 suits programming and mathematical reasoning.

Pipeline integration: LLaMA 4, Mistral Large, and Nemotron Ultra are good choices because, as open-source models, they can be integrated into your pipelines, IDEs, and cloud environments.
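The guidance above can be condensed into a small lookup, shown here as a sketch. The requirement keys (`"coding"`, `"multimodal"`, and so on) are hypothetical labels, and the model lists come straight from this section. Ranking by the number of requirements a model matches is an assumption, not a benchmark result.

```python
from typing import Dict, List

# Requirement labels are hypothetical; model lists follow the guidance above.
RECOMMENDATIONS: Dict[str, List[str]] = {
    "coding": ["MiniMax M2.5", "Grok 3", "LLaMA 4"],
    "multimodal": ["MiMo-V2-Flash", "Qwen 3.5"],
    "low_latency": ["Step-3.5-Flash", "Nemotron Ultra"],
    "multilingual": ["GLM-5", "LLaMA 4"],
    "finance_ecommerce": ["Qwen 3.5"],
    "math": ["Grok 3"],
    "pipeline_integration": ["LLaMA 4", "Mistral Large", "Nemotron Ultra"],
}


def shortlist(needs: List[str]) -> List[str]:
    """Rank models by how many of the given requirements recommend them."""
    counts: Dict[str, int] = {}
    for need in needs:
        if need not in RECOMMENDATIONS:
            raise KeyError(f"unknown requirement: {need!r}")
        for model in RECOMMENDATIONS[need]:
            counts[model] = counts.get(model, 0) + 1
    # Most matched requirements first; ties broken alphabetically.
    return sorted(counts, key=lambda m: (-counts[m], m))


if __name__ == "__main__":
    print(shortlist(["coding", "multilingual"]))
```

Treat the result as a starting shortlist to benchmark on your own workload, not as a final ranking.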

Conclusion

The best open-source LLMs that can surpass ChatGPT-5 give developers new possibilities in programming, reasoning, and multimodal AI.

Models such as LLaMA 4, Grok 3, and MiMo-V2-Flash offer speed, versatility, and the ability to be customized for domain-specific applications.

With these open-source models, developers can create powerful, adaptable, and state-of-the-art AI technologies for contemporary applications.

FAQ

Some open-source LLMs outperform ChatGPT-5 in coding, reasoning, multimodal tasks, or latency efficiency.

Grok 3, MiniMax M2.5, and LLaMA 4 excel in algorithmic problem-solving and code generation.

MiMo-V2-Flash handles text, images, and audio, while Qwen 3.5 handles text and images efficiently.

Large models (70B+) require high-end GPUs, but flash-optimized models like Step-3.5-Flash run efficiently on mid-tier devices.
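The hardware guidance above follows from simple arithmetic: weight memory is roughly the parameter count times the bytes per parameter. The sketch below covers weights only. The KV cache, activations, and framework overhead come on top. The function name is hypothetical.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    for bits in (16, 8, 4):
        print(f"70B params at {bits}-bit: {weight_memory_gb(70e9, bits):.0f} GB")
```

For 70 billion parameters this gives about 140 GB at 16-bit, 70 GB at 8-bit, and 35 GB at 4-bit. Even the 4-bit figure is more than a typical single consumer GPU holds, which is why quantization and smaller models matter so much for local deployment.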