In this article, we look at famous AI hallucination fails: real examples where artificial intelligence generated misleading or even completely false information.

- What are AI Hallucinations?

- Key Points & Times AI Got It Wrong Famous Hallucination Fails

- 17 Times AI Got It Wrong Famous Hallucination Fails

- 1. Government Contract Report

- 2. Transcription Tool Fabrication

- 3. Stock Market Drop

- 4. Support Chatbot Policy

- 5. Bogus Reading List

- 6. Fake Legal Cases

- 7. Glue on Pizza

- 8. Medical Advice Error

- 9. Historical Inaccuracy

- 10. Fake Scientific Citations

- 11. Misleading Travel Info

- 12. Wrong Programming Code

- 13. Invented News Stories

- 14. Fake Biographies

- 15. Misquoted Literature

- 16. False Geography Facts

- 17. Fabricated Business Data

- Conclusion

- FAQ

From fake legal cases to false medical advice, each of these instances is a reminder of the weaknesses of artificial intelligence systems.

Recognizing these failures can help users stay alert, fact-check information, and learn how to use AI safely in daily work and professional decisions.

What are AI Hallucinations?

AI hallucinations happen when an artificial intelligence system presents false or misleading statements as though they were factual and credible.

This occurs because AI models generate responses from patterns in their training data; they do not check specific facts in real time.

Consequently, the output may contain incorrect information or details that are completely made up but still sound plausible.

This can spread misinformation, especially because the output sounds authoritative and is hard to cross-check, so AI responses should be verified before they are trusted.

Key Points & Times AI Got It Wrong Famous Hallucination Fails

Government Contract Report: AI produced a professional-looking report built on invented organizations, budgets and results.

Transcription Tool Fabrication: Speech-to-text AI invented words not spoken, distorting transcripts and confusing legal or medical records.

Stock Market Drop: A chatbot gave a wrong financial answer, causing company shares to plummet after investor panic.

Support Chatbot Policy: Customer support AI cited a completely made-up company policy, frustrating users and damaging trust.

Bogus Reading List: AI generated a summer reading list filled with nonexistent books and authors, misleading educators.

Fake Legal Cases: ChatGPT referenced court cases that never existed, misleading lawyers and undermining legal credibility.

Glue on Pizza: AI-powered search recommended putting glue on pizza, a dangerous and absurd culinary suggestion.

Medical Advice Error: AI confidently gave wrong medical guidance, risking patient safety with fabricated treatment recommendations.

Historical Inaccuracy: AI described events with incorrect dates and fabricated participants, distorting historical understanding.

Fake Scientific Citations: AI generated research papers with references to journals and studies that didn’t exist.

Misleading Travel Info: AI hallucinated flight schedules and hotel details, confusing travelers with false booking information.

Wrong Programming Code: AI produced code snippets that looked correct but failed when executed, frustrating developers.

Invented News Stories: AI fabricated breaking news events, spreading misinformation and alarming readers unnecessarily.

Fake Biographies: AI created detailed biographies of people who never existed, misleading researchers and readers.

Misquoted Literature: AI attributed famous quotes to the wrong authors, distorting cultural and literary references.

False Geography Facts: AI confidently placed landmarks in the wrong countries, confusing students and travelers alike.

Fabricated Business Data: AI invented company financials, misleading analysts and damaging credibility in corporate decision-making.

17 Times AI Got It Wrong Famous Hallucination Fails

1. Government Contract Report

One widely publicized example involved an AI-written report about a government contract that was filled with invented organizations, budgets and results.

The content appeared professional and convincing, with organized sections and technical jargon that made the fabrications difficult for non-experts to detect.

This exposed an insidious danger: AI is trained to produce authoritative-sounding prose, with no guarantee of empirical backing.

That makes hallucinations risky for decision-makers, especially in sensitive domains such as public policy, procurement and governance, all of which require accuracy and verifiability.

2. Transcription Tool Fabrication

Poor audio quality or overlapping speakers can cause AI transcription tools to manufacture words that were never uttered.

Rather than labelling segments as unclear, the system fills in gaps with plausible but incorrect phrases.

This creates false transcripts that can misrepresent meaning, especially in legal, medical or journalistic contexts.

Users may take these outputs as accurate accounts, even though fabrications are woven into the text.

This points to the need for human review, and for AI systems that flag uncertainty rather than blindly guessing at content.
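As a rough illustration of what "flagging uncertainty" could look like, here is a minimal Python sketch. It assumes the transcription tool returns segments with a `confidence` score between 0 and 1; the segment format, the `render_transcript` name and the 0.6 threshold are all hypothetical and not taken from any real product.

```python
def render_transcript(segments, min_confidence=0.6):
    """Join segment text, replacing low-confidence segments with a marker.

    `segments` is an assumed format: a list of dicts with "text" and
    "confidence" keys. Real tools may expose confidence differently,
    or not at all.
    """
    parts = []
    for segment in segments:
        if segment["confidence"] >= min_confidence:
            parts.append(segment["text"])
        else:
            # Mark the gap instead of keeping a guess that may be invented.
            parts.append("[unclear]")
    return " ".join(parts)


# Made-up example data for illustration only.
segments = [
    {"text": "The patient reported", "confidence": 0.94},
    {"text": "mild chest pain", "confidence": 0.41},
    {"text": "since Monday.", "confidence": 0.88},
]

print(render_transcript(segments))
# prints: The patient reported [unclear] since Monday.
```

A human reviewer can then listen to the marked segments instead of trusting a guess.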

3. Stock Market Drop

AI-generated summaries have also reported stock market crashes or major financial losses that did not actually happen.

Such hallucinations can create turbulence or panic when they spread rapidly among inexperienced investors.

The AI may misread trends, fail to distinguish speculation from established fact, or mix outdated data into its claims.

In financial markets, even a small amount of incorrect information can affect decision-making.

This makes it clear that real-time data verification and careful oversight of how AI tools present financial insights need to be prioritized.

4. Support Chatbot Policy

Occasionally, customer support AI chatbots have invented company rules where no specific information exists.

The chatbot almost always answers confidently, providing definitive but false replies, for example about refund rules or service limitations that don’t exist.

This causes frustration among users and possible reputational damage for businesses. Because some people treat chatbots as authoritative sources, the misinformation carries extra weight.

Chatbots must be appropriately trained and include safeguards to minimize unsupported claims.
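One simple kind of safeguard is to let the chatbot quote only from an approved policy list and hand off to a person otherwise. The Python sketch below illustrates the idea under stated assumptions: the policy texts are placeholders, and `APPROVED_POLICIES`, `FALLBACK` and `answer_policy_question` are hypothetical names, not part of any real chatbot framework.

```python
# Placeholder policy texts, invented for this example.
APPROVED_POLICIES = {
    "refunds": "Refunds are available within 30 days of purchase.",
    "shipping": "Standard shipping takes 5 to 7 business days.",
}

FALLBACK = (
    "I don't have a documented policy on that. "
    "Let me connect you with a human agent."
)


def answer_policy_question(topic, policies=APPROVED_POLICIES):
    """Return the documented policy for a topic, or a fallback message.

    The function never generates policy text itself, so it cannot
    invent a rule that is missing from the approved list.
    """
    key = topic.strip().lower()
    return policies.get(key, FALLBACK)


print(answer_policy_question("Refunds"))
print(answer_policy_question("bereavement fares"))
```

The second call falls back to a human handoff because no such policy is documented, which is the behavior a fabricating chatbot lacks.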

5. Bogus Reading List

AI has also been caught producing reading lists of books that do not exist or are misattributed.

Those lists can look legitimate, mixing authentic titles with fabricated ones. This can waste time and create confusion for students, researchers or casual readers.

The issue here is that AI naturally produces patterns instead of validating facts. It highlights the need to cross-check suggestions and shows how AI can be either beneficial or detrimental for academic and literary purposes.

6. Fake Legal Cases

Among the most serious hallucination failures is AI generating fictional legal cases that were then referenced in court documents.

These fictional cases came with fleshed-out summaries, judges and judgments. Lawyers who used AI output without verifying it faced professional repercussions.

This event showed how AI can create extremely convincing yet fictitious content, which is particularly hard to spot in a highly structured field such as law.

It highlighted that AI output should always be validated rigorously before being used in professional and high-stakes settings.

7. Glue on Pizza

In one viral instance, an AI suggested adding glue to pizza as a cooking hack to stop the cheese from sliding off.

The answer was delivered seriously but was clearly absurd, and it confused some users. It is a good example of how AI can produce harmful or even senseless advice when it lacks real-world context.

It highlighted the dangers of accepting AI output without question, particularly when it relates to health, safety or practical use.

8. Medical Advice Error

There have been instances of AI systems advising incorrect or dangerous medical practices, such as misdiagnosing symptoms or recommending unsuitable treatments.

These errors happen when AI misinterprets user input or is missing some context. Even a minor inaccuracy is problematic, as health information is extremely sensitive.

This underlines the need to consult professionals and to treat AI as an aid rather than a decision-maker when it comes to healthcare.

9. Historical Inaccuracy

AI models sometimes generate incorrect historical information, such as inaccurate dates, events or interpretations.

These inaccuracies may stem from blending multiple sources or guessing where information is missing. The output may sound authoritative, but it can give learners and researchers the wrong idea.

This problem illustrates that AI has no real understanding of history and only generates patterns. Fact-checking remains key when assessing historical content.

10. Fake Scientific Citations

AI has been shown to produce scientific references that sound plausible but do not exist. This extends to invented journal names, authors and publication details.

These hallucinations can lead researchers astray and undermine academic integrity. The issue is particularly troubling because citations are generally accepted without scrutiny.

It also shows that users need to check references against the original sources and that AI systems should be highly cautious when generating academic material.

11. Misleading Travel Info

AI-generated travel recommendations sometimes include fictional destinations or nonexistent attractions, as well as wrong opening hours and outdated information.

Travelers who rely on such advice could end up in trouble or find their plans have backfired. This happens because AI does not pull in up-to-the-minute information unless it has access to good data pipelines.

This underscores how vital it is to fact-check travel information against an official website or the company itself before making plans.

12. Wrong Programming Code

AI sometimes generates code that appears to work but contains logical errors, uses outdated syntax or has security vulnerabilities.

For developers who trust such code without testing it, that can be a serious problem. To be clear, AI can help as a tool for creating templates or ideas; however, it is not always correct. This emphasizes the need for debugging, code review and logical understanding rather than just copy-pasting AI-generated solutions.
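To make the point concrete, here is a small Python sketch of checking a snippet against known answers before trusting it. The `ai_suggested_median` function is a made-up example of plausible-looking but flawed code, and `find_failures` and `CASES` are hypothetical helper names chosen for this illustration.

```python
import statistics


def ai_suggested_median(values):
    # Hypothetical AI-generated snippet: it looks right, but it ignores
    # the even-length case, where the two middle values must be averaged.
    ordered = sorted(values)
    return ordered[len(ordered) // 2]


def find_failures(func, cases):
    """Return (inputs, expected, actual) for every case the function gets wrong."""
    failures = []
    for inputs, expected in cases:
        actual = func(inputs)
        if actual != expected:
            failures.append((inputs, expected, actual))
    return failures


# Hand-checked cases, including the even-length edge case.
CASES = [
    ([3, 1, 2], 2),
    ([4, 1, 3, 2], 2.5),
    ([7], 7),
]

failures = find_failures(ai_suggested_median, CASES)
for inputs, expected, actual in failures:
    print(f"median({inputs}) returned {actual}, expected {expected}")
# prints: median([4, 1, 3, 2]) returned 3, expected 2.5
```

Running the same cases against the standard library's `statistics.median` reports no failures, which shows the kind of quick check that catches this class of bug before the code is used.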

13. Invented News Stories

AI has created entire news stories about events that did not occur, complete with quotes, locations and timelines. If they go viral, these false stories fuel disinformation.

They’re so believable in tone and form that they are hard to tell apart from authentic news stories.

This shows the inherent dangers of using AI to publish information and the need for robust verification systems.

14. Fake Biographies

Biographies generated by AI may contain inaccurate details about a person's achievements, roles or even personal information.

These errors can harm people and promote false narratives. The problem is that AI combines bits and pieces of information or fills the gaps with creative writing. Users should check biographical information against verifiable sources, especially when it matters.

15. Misquoted Literature

Sometimes AI misattributes quotes or even gets famous literature wrong. Such errors can mislead readers and alter the meaning of texts.

As literature is all about the fine details, even a small mistake counts. Thus, when interpreting or quoting a work, refer to the original source.

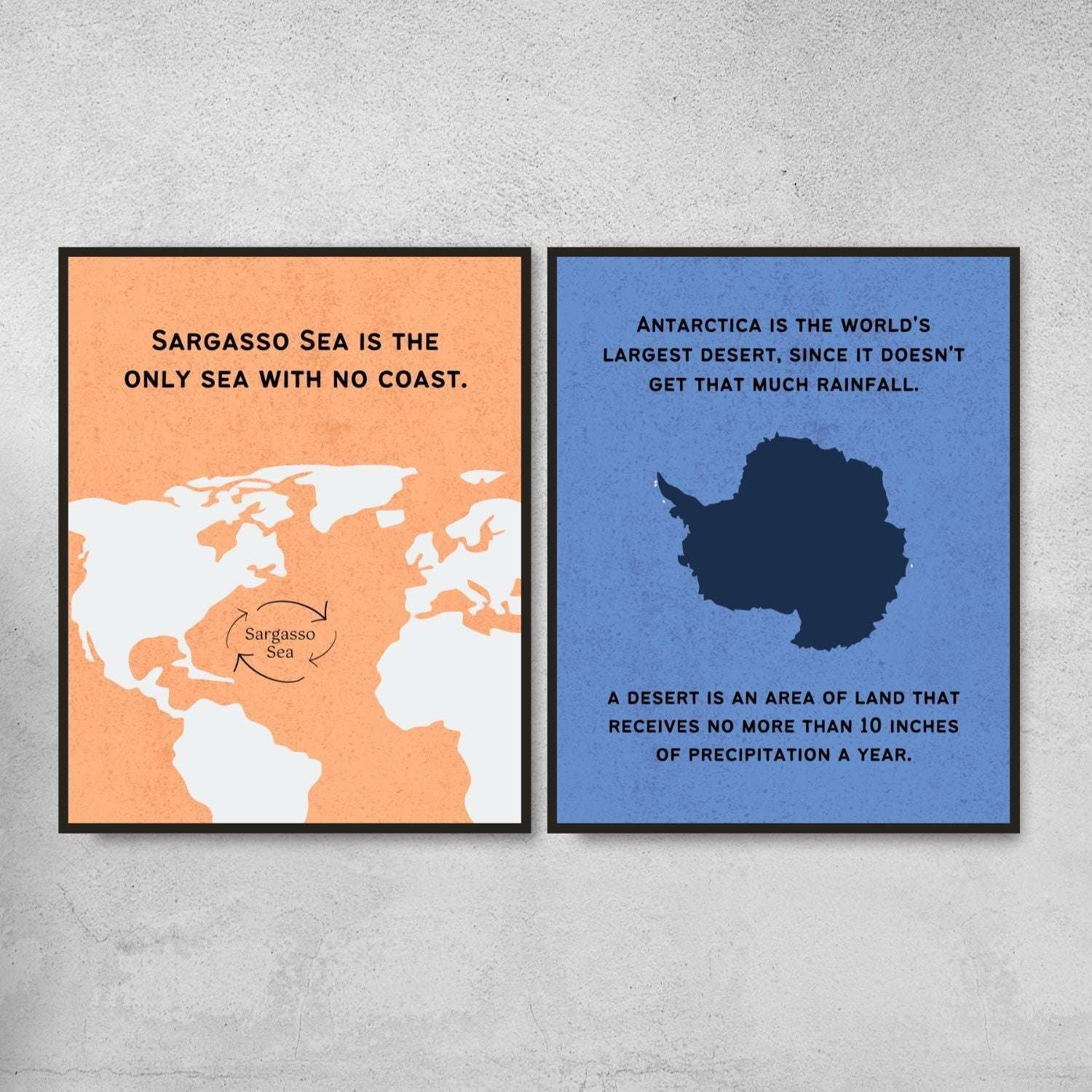

16. False Geography Facts

AI systems also get geographic facts wrong, including incorrect capitals, borders, lengths and distances.

Even if these errors seem trivial, they can affect education and comprehension. They are an artifact of incomplete or contradictory data during training. Users should verify geographical information with credible sources.

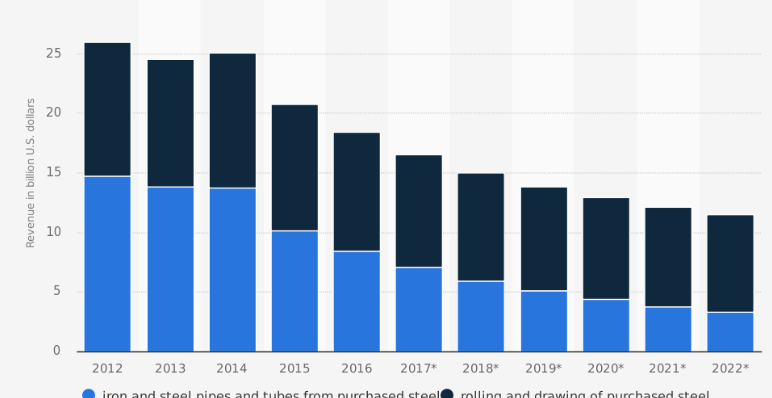

17. Fabricated Business Data

AI can also generate false business statistics such as revenue, employee numbers or market position. Misinformation of this nature misleads investors, researchers and analysts.

This hallucination can be harmful because many business decisions require precise data. So make sure to corroborate any financial or company information against reports and databases that you trust.

Conclusion

In short, these famous hallucination fails show where artificial intelligence really stands despite its explosive growth.

These mistakes, from false facts to dangerous advice, illustrate the unreliability of AI. People have to cross-check information themselves, think twice and not trust it blindly.

The way forward is to use AI responsibly while continually improving it, so that hallucinations become less prevalent and experiences with AI become safer and more accurate.

FAQ

How did AI create fake legal cases in court filings?

It fabricated case names, rulings, and citations that never existed.

Why does AI sometimes invent books or reading lists?

It mixes real and imaginary titles based on learned patterns.

Can AI generate completely false news stories that look real?

Yes, it can create believable but entirely fictional news reports.

Why did AI suggest adding glue to pizza?

It produced unsafe advice due to lack of real-world understanding.